Safer Internet Day

The internet wasn’t built with children in mind, but it is today’s children who will shape its future. By giving young people the tools to navigate the online world with awareness and confidence, we can help them to better identify and understand the harms that can occur.

Giving children this critical understanding at a young age not only impacts the decisions they make now but can also shape the decisions they will make as the entrepreneurs and problem solvers of the future.

By empowering young people with the skills and mindset to build a fairer, more inclusive and safer technology sector, we will create a brighter technology future for us all.

We strongly believe in the ‘swimming pool’ model – don’t teach kids to stay away from the water, but instead help them stay safe by teaching them to swim. We want them to use technology and the internet, to embrace it and make things with it, but also to be safe when they do so.

An important part of creating a safer internet for our children is to give them greater confidence to question what they see and do online, to empower them to make the right decisions, and to make sure they know who to turn to when they are unsure of what to do.

Machine learning

One of the ways to do this is to get ‘under the hood’ of young people’s online world and show them how it is shaped. In our Machine Learning courses, we want young people to see that machine learning affects their lives in very fundamental ways and to have informed discussions as users of these technologies.

We focus on examples that are highly relevant to their world. For example, we cover recommendation systems, the algorithms that surface music, films and videos on our favourite streaming platforms, as well as filter bubbles and echo chambers on popular websites such as YouTube.

Olivia, an Apps for Good student from Dunoon Grammar School in Scotland, said, ‘I had not heard of machine learning, but once it was explained to us, it was clear that machine learning was already part of my life and I had already been encountering it through social media and services such as Netflix.’

Ethical concerns

A powerful discussion for students involves looking at the benefits and potential harms of facial recognition used by police in the UK and by schools in China to monitor student attentiveness. Students also watch a TED Talk from Joy Buolamwini that explores how, as an MIT grad student, she discovered that the facial analysis software she was working on didn’t detect her own face because the people who coded the algorithm hadn’t taught it to identify a broad range of skin tones and facial structures.

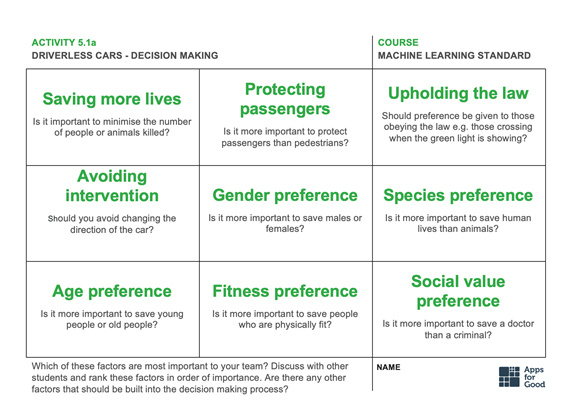

Another insightful exercise for young people is MIT’s Moral Machine (https://www.moralmachine.net/), a platform for gathering a human perspective on moral decisions made by machine intelligence, such as driverless cars. In this exercise, students are asked to act as the judge when a driverless car must choose the ‘lesser’ of two evils, such as injuring one passenger versus five pedestrians. Students follow this with a group discussion on what factors to prioritise and whether it is ethical to pre-programme cars to make these decisions.

Reimagining the internet of the future

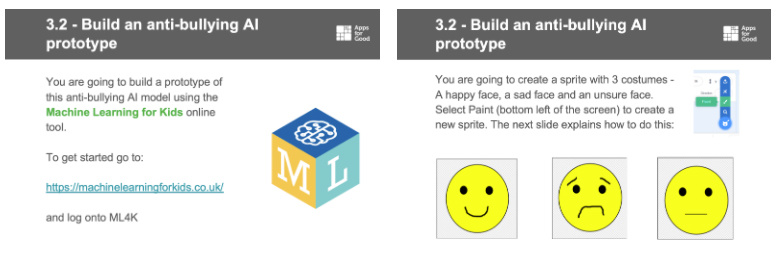

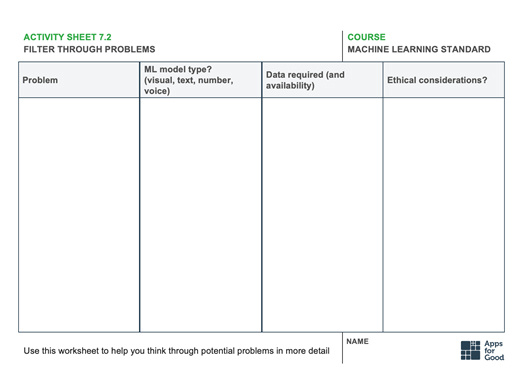

One of the first exercises in our Machine Learning course is for young people to recreate Instagram’s anti-bullying algorithm using the Scratch-based tool Machine Learning for Kids. Students have the chance to train their own model to detect bullying by ‘teaching’ the model both nice and inappropriate phrases and then seeing how well their model copes when provided with phrases at random. Through this exercise, young people can see how companies are using machine learning to try to protect users at scale, as well as the role they would play as the product developer selecting the data sources that shape the technology’s effectiveness and therefore the safety and wellbeing of Instagram’s users.
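For readers who want to see the idea behind this exercise in code, here is a minimal sketch of a phrase classifier in plain Python. It is not Instagram’s algorithm, nor how Machine Learning for Kids works internally; it is a toy word-counting (naive Bayes) model, and the training phrases, labels and function names are all invented for illustration.

```python
import math
from collections import Counter

# Hypothetical training phrases, invented for this sketch.
# Both labels have the same number of examples, so class priors are omitted.
TRAINING = {
    "nice": [
        "you are a great friend",
        "well done today",
        "i love your drawing",
        "thanks for helping me",
    ],
    "unkind": [
        "you are so stupid",
        "nobody likes you",
        "go away loser",
        "i hate your drawing",
    ],
}


def tokenize(phrase):
    """Split a phrase into lowercase words."""
    return phrase.lower().split()


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {label: Counter() for label in examples}
    for label, phrases in examples.items():
        for phrase in phrases:
            word_counts[label].update(tokenize(phrase))
    vocab = {word for counts in word_counts.values() for word in counts}
    return word_counts, vocab


def classify(phrase, word_counts, vocab):
    """Return the label whose training words best match the phrase."""
    scores = {}
    for label, counts in word_counts.items():
        total = sum(counts.values())
        score = 0.0
        for word in tokenize(phrase):
            # Add-one smoothing so unseen words do not zero out a label.
            score += math.log((counts[word] + 1) / (total + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


word_counts, vocab = train(TRAINING)
print(classify("nobody likes your drawing", word_counts, vocab))
print(classify("thanks for the great drawing", word_counts, vocab))
```

With only eight training phrases the model is easy to fool, which mirrors the point of the classroom exercise: the examples a developer chooses largely determine how well the model protects its users.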

Students are then asked to put this learning into action when they build their own products. Now they are in control, with the opportunity to drive the creation of a product that puts their users’ needs at its heart, using a more inclusive design process.

A great example of this is the I’m Okay app created by Josie, Emily, Katie and Alexandra, students at Stratford Girls’ Grammar School in Warwickshire, West Midlands. The app supports young people who are exploring their sexuality and gender, with real-life stories from people who’ve been there alongside resources and support services.

Making a contribution to society

When building the app, the students had to think in-depth about how very personal and sensitive information would be shared in an anonymous way, how any kind of social interaction might work to create a safe and open environment, and how that would be monitored by the I’m Okay team.

Ultimately, we want young people to feel empowered in their role in shaping a better online world today and in the future.

Bob Schukai, SVP – Identity Solutions at Mastercard, reflected on his experience of the Apps for Good students the company had invited on placement.

‘It’s not about “What are you going to pay me?” but what are we, as a company, doing to make the world a better place. I do think that companies are much more aware of the role they must play in contributing to society. This is a direct result of the questions these kids were asking, and the expectations they had about the company they would be working for. I feel good knowing that they are now in the workforce asking how we can make people’s lives better.’

Apps for Good is an independent charity offering free technology courses. Since 2001 they’ve worked with teachers to unlock the potential of over 200,000 students around the UK, and beyond. Apps for Good courses encourage students to think about the world around them and solve the problems that they find by creating apps and products with machine learning and IoT. They have a range of free courses for schools to deliver to students aged 10-18: https://www.appsforgood.org/courses.
